2026 March - What I Have Read

Blogs and Articles

"I am a flawed person in the center of an exceptionally complex situation, trying to get a little better each year, always working for the mission. "

https://blog.samaltman.com/2279512

An AI Agent Published a Hit Piece on Me - Scott Shambaugh [Link]

Introducing our Science Blog - Anthropic [Link]

PayPal, Rainforest join forces - Tatiana Walk-Morris, Payments Dive [Link]

Rainforest will integrate PayPal’s suite of services—including PayPal, Venmo, and Buy Now, Pay Later (BNPL)—directly into its software platform.

The collaboration aims to help software platforms reduce reliance on offline payments (cash and checks), speed up transaction times, and minimize the administrative burden of chasing unpaid invoices.

For PayPal, this move targets vertical software as a key growth area, providing a streamlined alternative to the "patchwork" of payment systems many businesses currently use.

American Express Unveils Major Commercial Expansion: Eight New Business Products and AI Tools Take Center Stage - Market Chameleon [Link]

Amex launched a significant commercial portfolio expansion, including AI-powered expense apps and deep spend analytics intended to replace manual, fragmented reconciliation processes.

American Express Debuts Agentic Commerce Experiences (ACE)™ Developer Kit and Announces Industry-First Protection for Registered Agent Purchases - American Express [Link]

Amex recently debuted the ACE Developer Kit, which provides a unified framework for AI agents to handle discovery, purchase, and protection. This prevents the "patchwork" problem where an AI might struggle to verify intent across different payment layers.

Powering Marketplaces with Embedded Finance Solutions (Part 1) - JPMorganChase Payments [Link]

Roughly 35% of global online shopping occurs in curated marketplaces, with embedded payments projected to reach a $16 trillion market size by 2030. Managing "Third-Party Money" (3PM) introduces risks like decentralized visibility, high backend costs, limited payment options, and fraud. J.P. Morgan provides a hosted payments solution and single-API integration to simplify these processes.

Benefits of this solution are:

- Protects sensitive data for buyers and sellers.

- Optimizes the flow of funds and onboarding for third-party merchants.

- Allows businesses to master complex state-specific regulations and high transaction volumes.

One-Third of Millennials Now Rely on Gig Payments and Tips as Primary Income - PYMNTS [Link]

Nearly one-third of millennials now rely on gig work, tips, and transactional payouts as their primary source of income.

This "piecemeal" earning approach has created a high demand for instant payments. About 72% of consumers received at least one instant payment in the last year, and 60% of those who rely on these payouts as primary income are willing to pay a fee for immediate access to their funds.

Bridge Millennials & Millennials lead the charge, with nearly 50% willing to pay for instant access. 78% of Gen Z received at least one instant payment last year, with 45% using it as their primary method of receiving money.

Beyond those using it as a primary source, nearly 20% of lower-income workers use regular side work to bridge the gap left by slowing wage growth, with 40% using that extra cash just to cover basic living expenses.

The trap Anthropic built for itself - Connie Loizos, TechCrunch [Link]

By successfully lobbying for "self-regulation" and resisting binding U.S. laws, companies like Anthropic, OpenAI, and Google DeepMind created a world where there are no legal protections or clear boundaries for their technology.

Max Tegmark suggests the only way out of this trap is to treat AI like any other critical industry, requiring "clinical trials" and proof of safety before any powerful system is released to the public.

Visa, Mastercard and Google are building agentic payments. None are solving the real problem. - Nick Dunse, Finextra [Link]

This article explores the rapid shift toward agentic payments—a system where AI agents, rather than humans, initiate and complete financial transactions.

Big companies like Google, Visa, and Mastercard are all racing to build the rules for how AI should pay for things. The risk is that each company wants you to use its own system. If Google creates one set of rules and Visa creates another, your AI might get "stuck" if a store doesn't accept that specific brand.

The author argues that for AI shopping to actually work, we need a universal bridge. Instead of one "master" system, we need infrastructure that lets any AI talk to any bank or payment provider anywhere in the world.

Designs are vehicles for ideas that make the conversation possible. So when you hear feedback on the designs, it’s a conversation for all of us. It’s a dialogue. It’s a dialogue that would not have happened if not for the designs. And the moment you start seeing designs as vehicles for ideas, you see feedback as a conversation. A collective journey of finding the truth.

― 16 pieces of design wisdom - Hardik Pandya [Link]

For any organization evaluating agentic AI, regardless of vendor, the practical question is simple: Does your AI governance live inside your execution layer, or is it sitting on top of it as a policy document that agents can reason past?

ServiceNow resolves 90% of its own IT requests autonomously. Now it wants to do the same for any enterprise - VentureBeat [Link]

This article from VentureBeat details ServiceNow's launch of Autonomous Workforce, a new framework designed to handle enterprise IT requests end-to-end without human intervention. ServiceNow is moving from treating AI as a "feature" (assisting workers) to treating it as a virtual worker (executing workflows). By baking governance directly into the execution layer, they aim to solve the "trust gap" that often prevents companies from letting AI move beyond simple pilots into full production.

I Had Claude Read Every AI Safety Paper Since 2020, Here's the DB [Link] [DB]

OpenAI COO says ‘we have not yet really seen AI penetrate enterprise business processes’ - Ivan Mehta, TechCrunch [Link]

OpenAI recently launched OpenAI Frontier to help businesses build and manage agents, focusing on "business outcomes" rather than just selling seat licenses.

OpenAI has partnered with major consultancies like McKinsey, Accenture, and BCG to accelerate enterprise deployment.

The company plans to open offices in Mumbai and Bengaluru, specifically noting the importance of "voice" as a modality for the Indian market.

Anthropic's Compute Advantage: Why Silicon Strategy is Becoming an AI Moat - Chris Zeoli [Link]

Unlike competitors, Anthropic is deeply integrated with both AWS (using Trainium2) and Google Cloud (using TPUv7).

Anthropic has committed to significant infrastructure, including:

- Project Rainier: A massive AWS cluster in Indiana.

- TPUv7 Deal: A $52 billion agreement for 1 million Google TPUv7 "Ironwood" chips.

- Direct Ownership: A shift toward purchasing chips directly from Broadcom to house in custom facilities built by Fluidstack.

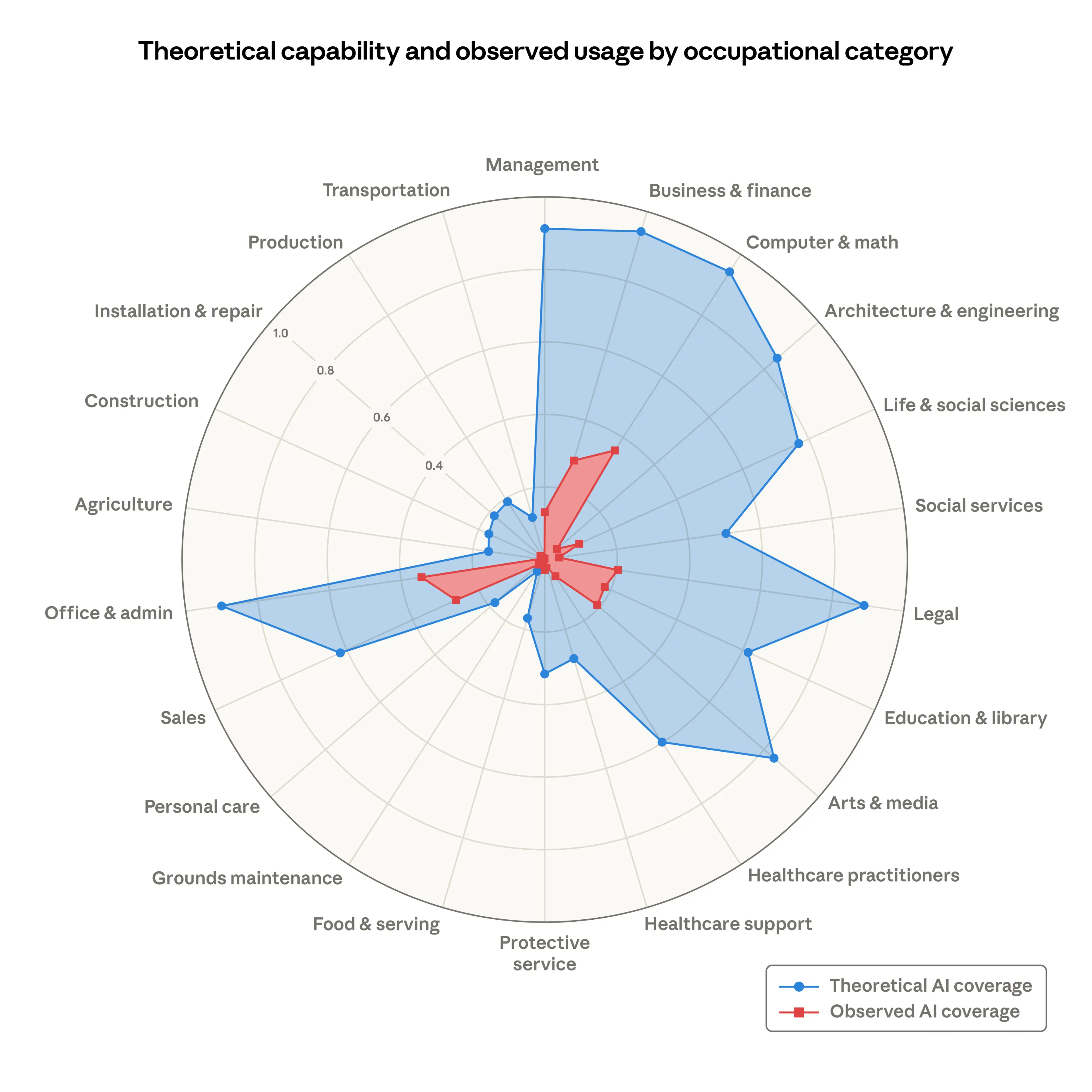

Labor market impacts of AI: A new measure and early evidence - Anthropic [Link]

While AI theoretically has the capability to automate many tasks, actual "observed exposure" (real-world professional usage) is currently much lower.

The research identified specific roles where AI usage is already heavily integrated into work-related tasks:

- Computer Programmers: The most exposed group (75% coverage).

- Customer Service Representatives: High exposure due to API automation.

- Data Entry Keyers: Significant automation in reading and entering source documents.

- Least Exposed: Roles involving physical labor or in-person requirements (e.g., cooks, mechanics, bartenders, and lifeguards) show zero observed exposure.

Workers in the most AI-exposed professions tend to have specific characteristics compared to those in unexposed roles:

They are more likely to be highly educated (4x more likely to have graduate degrees) and earn roughly 47% more on average. They are more likely to be female, white, or Asian.

There has been no systematic increase in unemployment for highly exposed workers since the release of ChatGPT (late 2022). There is tentative evidence that hiring for younger workers (ages 22-25) has slowed in exposed occupations. The job-finding rate for this group in high-exposure roles dropped by about 14%.

The five AI value models driving business reinvention - OpenAI [Link]

Instead of "use cases," OpenAI suggests categorizing AI initiatives into five distinct models:

- Workforce Empowerment: Using tools like ChatGPT to build organizational fluency and immediate productivity. It is the foundation for all other models.

- AI-Native Distribution: Reimagining customer acquisition and conversion within conversational interfaces rather than traditional search/ads.

- Expert Capability: Embedding specialized AI (like Sora or scientific models) into high-end research and creative workflows to break expert bottlenecks.

- Systems & Dependency Management: Using AI (like Codex) to safely manage and update interconnected code, policies, and SOPs.

- Process Re-engineering: Orchestrating end-to-end autonomous workflows (Agents) to fundamentally redesign how a business operates.

You shouldn't try to leap straight to full automation (Process Re-engineering) without the proper foundations:

- Fluency (from Workforce Empowerment) enables Governance.

- Governance enables System Integration.

- Integration enables Agent-led Operations.

Shift in Leadership Thinking:

- Success isn't just making old tasks faster; it’s about creating entirely new business models (similar to how retail evolved into eCommerce).

- Stop looking just at cost savings. Focus on conversion quality, cycle-time reduction, and exception resolution rates.

- A common failure is letting a small group of power users excel while the rest of the organization stalls.

Practical Playbook:

- Phase 1: Build fluency and trust (empower the workforce).

- Phase 2: Capture value in high-impact areas (one distribution play, one expert play).

- Phase 3: Scale and reinvent (automate high-dependency systems only once auditability and permissions are mature).

Ask a Techspert: How does AI understand my visual searches? - Molly McHugh-Johnson [Link]

Build agents that run automatically - Cursor [Link]

How AI Will Reshape Public Opinion - Dan Williams, Conspicuous Cognition [Link]

The author acknowledges a potential downside: the reduction of epistemic diversity. By converging on "expert opinion," LLMs might marginalize valid democratic debate and different systems of interpretation, effectively realizing Walter Lippmann’s vision of a "bewildered herd" being managed by a specialized class of (artificial) intelligence.

Anthropic Finds 22 Firefox Vulnerabilities Using Claude Opus 4.6 AI Model - Ravie Lakshmanan [Link]

Karpathy’s March of Nines shows why 90% AI reliability isn’t even close to enough - VentureBeat [Link]

Reliability gaps represent significant business risk. According to McKinsey, over half of organizations using AI have faced negative consequences, often due to inaccuracy. Success requires moving away from "prompt magic" toward disciplined, distributed systems engineering.

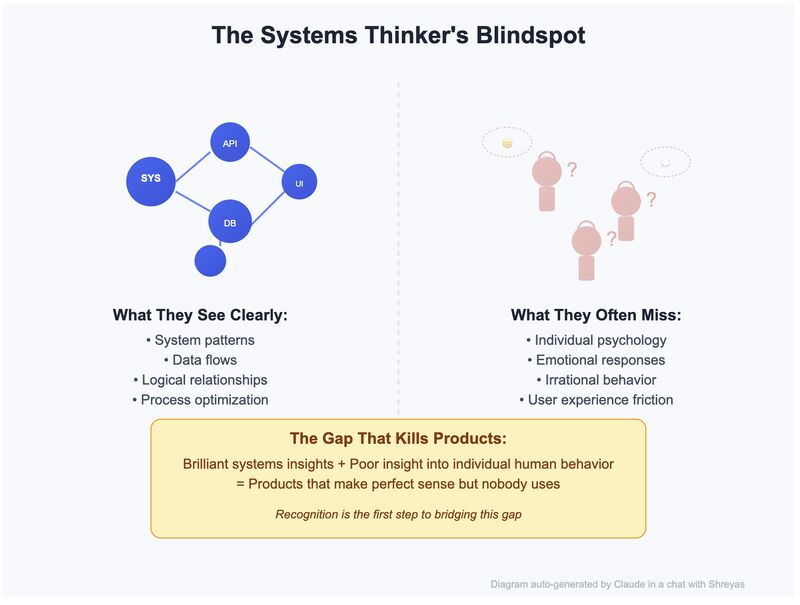
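
To see why 90% reliability per step falls short, here is a minimal sketch of how per-step reliability compounds across a multi-step agent workflow. It assumes every step succeeds or fails independently, which is a simplification, and the function name and the 10-step workflow are my own illustrative choices, not taken from the article.

```python
def end_to_end_success(per_step: float, steps: int) -> float:
    """Chance that every step in a chain succeeds.

    Assumes steps are independent, so the probabilities multiply.
    """
    return per_step ** steps


# Compare one to four "nines" of per-step reliability on a 10-step workflow.
for nines, p in enumerate([0.9, 0.99, 0.999, 0.9999], start=1):
    rate = end_to_end_success(p, 10)
    print(f"{nines} nine(s) per step -> 10-step success rate {rate:.1%}")
```

Under these assumptions, a workflow whose steps are each 90% reliable completes all 10 steps only about a third of the time, which is why each extra nine matters.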

Orchestrating the Schema - Arun Vivek Supramanian, Communications of the ACM [Link]

Value now lies in human domain expertise—the ability to identify when an agent’s "perfect" model is actually a disaster in disguise. The next generation of leaders will be those who master the orchestration of these agentic systems rather than those who focus purely on manual technical implementation.

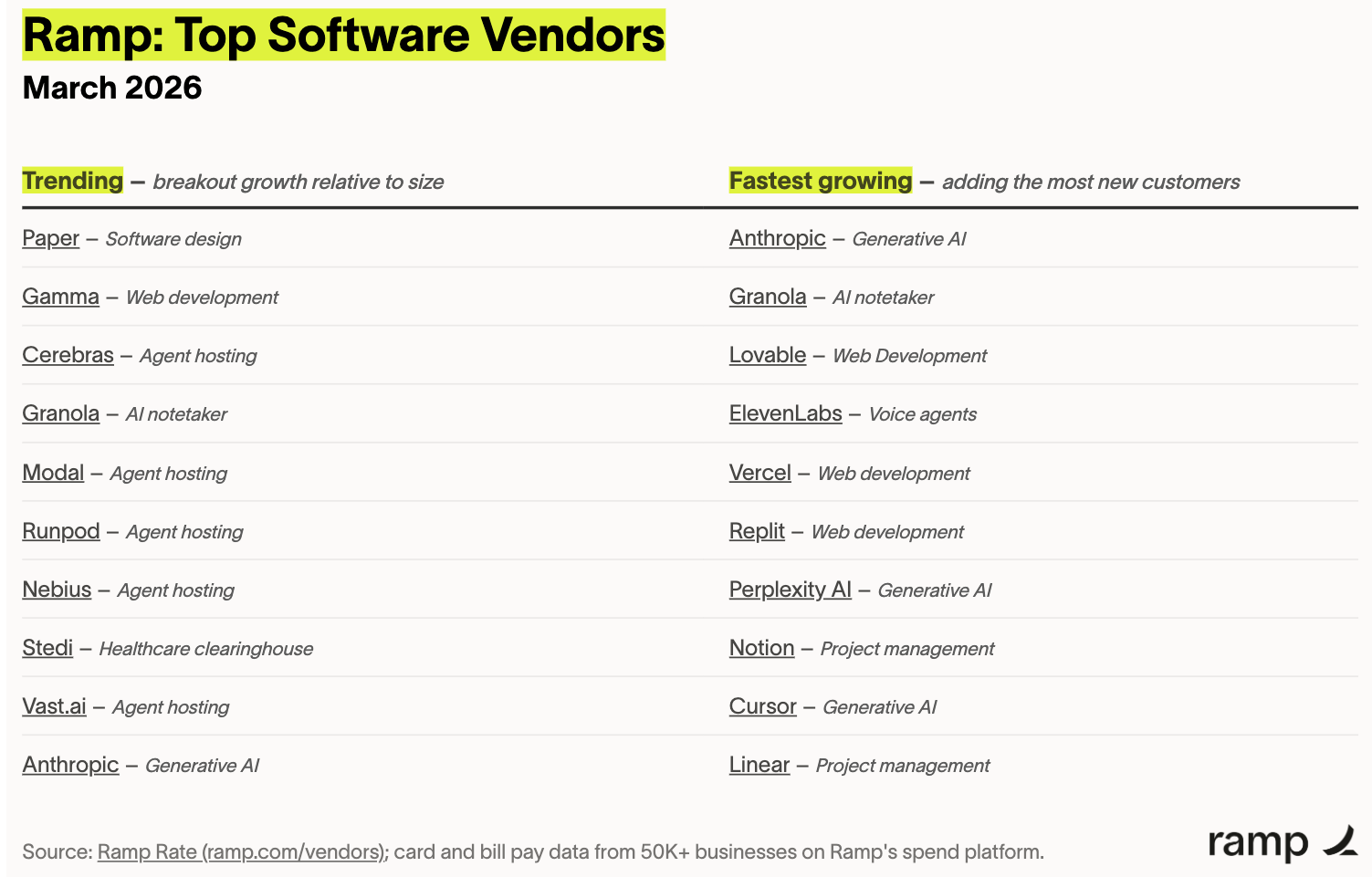

Top SaaS vendors on Ramp (March 2026) - Ara Kharazian, Ramp [Link]

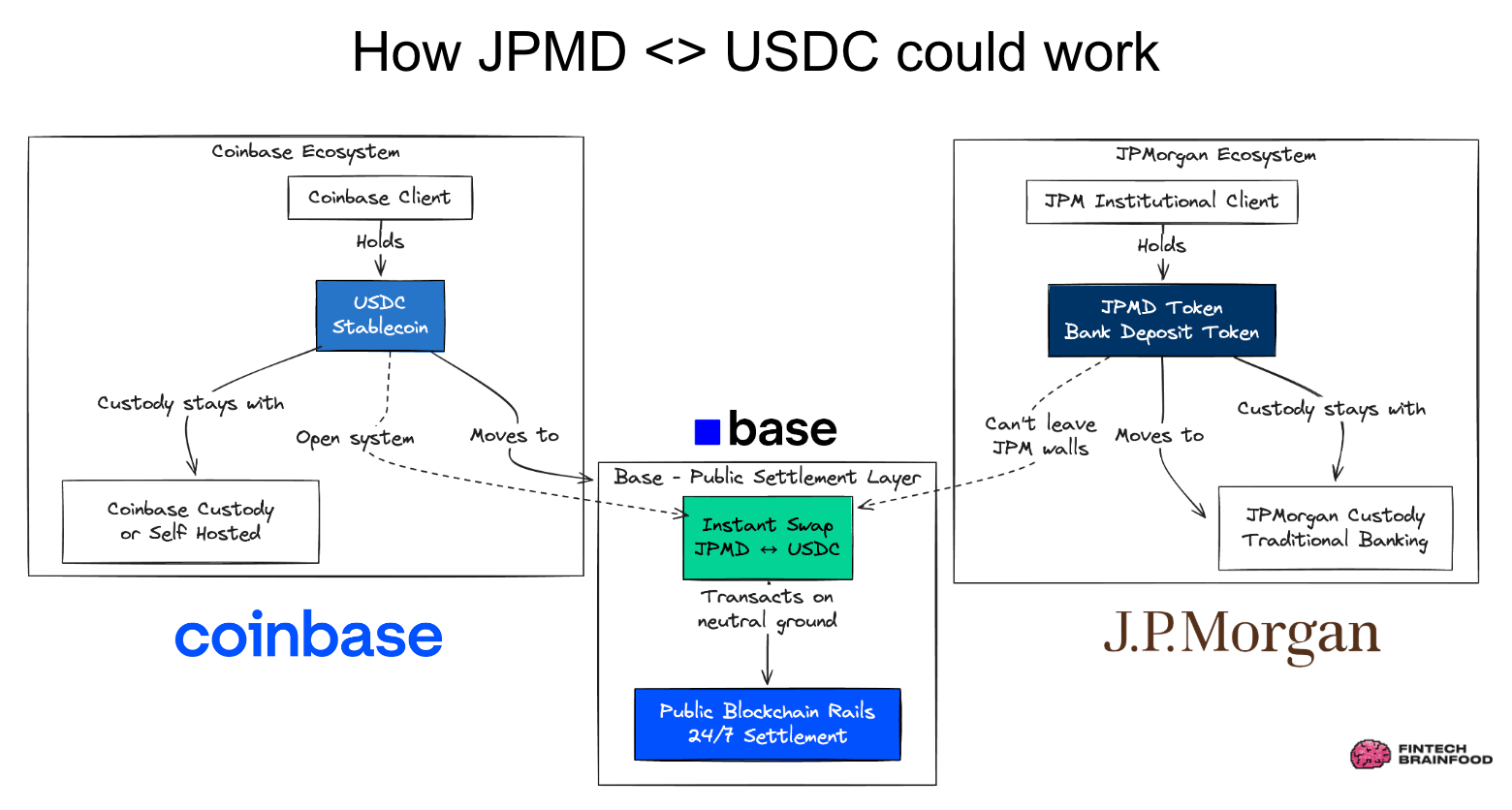

Agents will use cards first. Then stablecoins. - Simon Taylor [Link]

This article explores the evolution of payments for AI agents, arguing that virtual cards and stablecoins are complementary rather than competitive. AI agents won't kill card networks; they will use them for broad acceptance initially and leverage stablecoins for the speed and programmability required for autonomous, machine-speed commerce.

- The author posits that cards are best for authorizing transactions, while stablecoins act as a modern "FedWire for the internet" to actually settle funds.

- Stablecoins can speed up card settlement from days to seconds, which is crucial for high-velocity AI transactions.

- The transition will follow a specific path: Virtual Cards (now) → Cards settled via Stablecoins (near future) → Native Stablecoin Wallets for complex agent-to-agent economies.

Paper and Report

Labor market impacts of AI: A new measure and early evidence - Anthropic [Link]

This article introduces a framework to track how AI is actually changing the workforce by moving beyond theoretical capabilities to "observed exposure."

- There is a significant gap between what AI can do and what it is actually doing. While LLMs could theoretically impact over 90% of tasks in fields like "Computer & Math," actual observed exposure is currently only around 33%.

- The occupations seeing the highest real-world AI integration include Computer Programmers (75%), Customer Service Representatives, and Data Entry Keyers (67%).

- Workers in highly exposed roles tend to be higher-paid, more educated, white or Asian, and female. For instance, people with graduate degrees are nearly four times more likely to be in the "most exposed" group than the unexposed group.

- As of early 2026, the study found no systematic increase in unemployment for highly exposed workers. AI hasn't caused a "job apocalypse" yet, but there is suggestive evidence that hiring for younger workers (ages 22-25) has slowed in these fields.

An AI Model of the Human Brain - Meta [Link]

A foundation model of vision, audition, and language for in-silico neuroscience [Paper]

Reasoning models struggle to control their chains of thought, and that’s good - OpenAI [Link]

Chain-of-Thought (CoT) controllability.

YouTube and Podcasts

How Anthropic’s $100M Anthology Fund Works | Menlo Ventures - Sourcery with Molly O'Shea [Link]

Takeaways:

- We’ve moved from training on the whole internet to RLHF (Reinforcement Learning from Human Feedback), and now into specialized RL and domain-specific proprietary data.

- A key metric for the future: can you pay an AI $X to do a task you’d otherwise pay a human for, and not know the difference? The goal is to drive that value of $X higher.

- Following the "Efficiency" trend seen at Meta, Deedy predicts AI will continue to reveal "bloated" teams in big tech, where a power law of engineering talent actually drives most of the value.

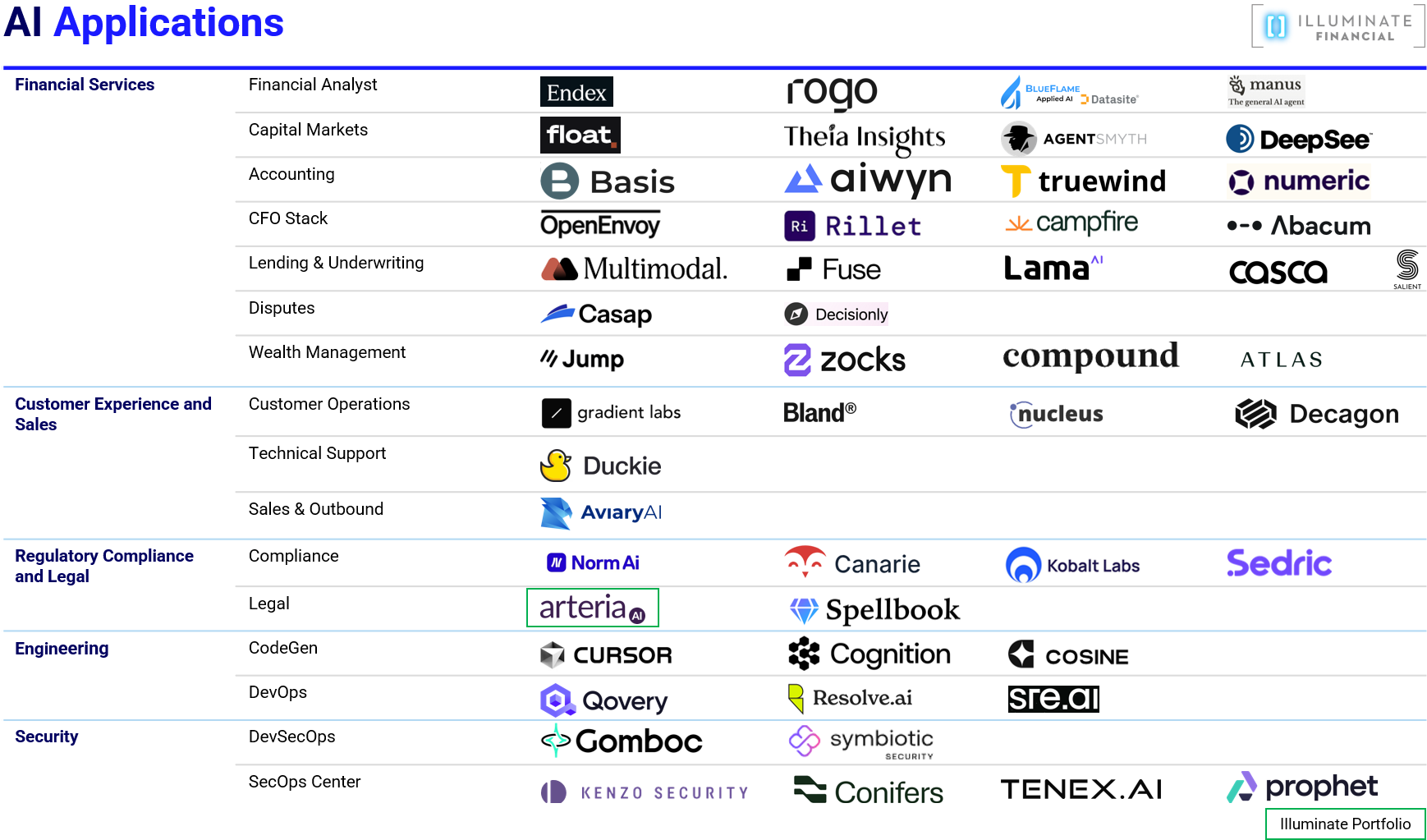

- He believes the "Valley" focuses too much on legal and finance AI. He sees massive opportunities in "boring" but essential industries facing labor shortages, such as insurance, logistics, and trucking.

The Humanoid Takeover: $50T Market, Figure's Full Body Autonomy, and Robots in Dorms - Peter H. Diamandis [Link]

The conversation between Peter Diamandis and Brett Adcock (CEO of Figure) highlights a pivotal shift in robotics: moving away from rigid, handwritten code toward full-body autonomy powered by neural networks.

Google’s AI Comeback, Enterprise Agents, The Real Path to AI ROI — W/ Promevo CEO Karthik Kripapuri - Alex Kantrowitz [Link]

Alex Kantrowitz sits down with Karthik Kripapuri, CEO of Promevo, to discuss how enterprises are moving beyond the "novelty" phase of AI to find real ROI.

Takeaways:

- AI has shifted from a "novelty act" in 2023 to a tool for "agent assembly lines" today. Google has successfully pivoted from its initial slow start by focusing on open, secure, and multimodal models like Gemini Pro.

- The single biggest blocker for AI agents is siloed or poor-quality data. Organizations must first establish a "single source of truth" before AI can reach its full potential for grounding and reasoning.

- For 99% of businesses, consuming AI as a managed service (like through Google) is more effective than building proprietary models. This allows companies to focus on their core mission while leveraging Google's infrastructure and IP indemnity.

Dario Amodei — “We are near the end of the exponential” - Dwarkesh Patel [Link]

Takeaways:

- Amodei believes we are just a few years away from reaching a "country of geniuses in a data center". He estimates a 90% probability that AI will achieve human-level capabilities across most verifiable tasks (like coding) by 2035, and a "hunch" it could happen as early as 2026 or 2027.

- Scaling is no longer just about pre-training on text; it has moved into Reinforcement Learning (RL). Amodei notes that RL performance (e.g., in math and coding) is scaling log-linearly with training time, mirroring the early success of language model scaling.

- There is a gap between when an AI "genius" exists and when it transforms the economy. Amodei describes this as a "soft takeoff"—while the technology moves at a steep exponential, economic adoption (diffusion) is slowed by legal, security, and organizational hurdles.

- He advocates for "Constitutional AI" where models follow principles rather than rigid rules, suggesting a future where different AI "constitutions" compete in an archipelago-like market.

- Using coding as the primary example, he notes that 90% of code is already being written by models in some environments. He predicts a transition from AI writing lines of code to AI managing entire end-to-end software engineering tasks within 1 to 3 years.

Ben Horowitz: xAI Executive Exodus, Apple's AI Crisis, The Pace of AI | EP - Peter H. Diamandis [Link]

Takeaways:

- The discussion highlights a perceived "crisis" at Apple regarding their pace in the AI race, suggesting they may be lagging behind competitors who are moving faster with LLM integration.

- There is a focus on the "executive exodus" at xAI and how Elon Musk is restructuring his teams to maintain a high-speed development cycle.

- A visionary look at moving AI compute off-planet. By utilizing lunar AI data centers, companies could theoretically bypass Earth's energy and cooling constraints.

- Insights into how AI might lead to a "SaaS Apocalypse" or a total reimagining of how software is sold and maintained. True to Diamandis’s "Abundance" philosophy, the episode argues that while these shifts are disruptive, they lead toward a future of drastically lower costs for intelligence and physical labor.

Revenge of the A.I. Bot: ‘I’m Just the First Person This Has Happened to’ - Hard Fork [Link]

Takeaways:

- Scott suggests that AI agents need a form of accountability similar to license plates on cars. This would create a chain of ownership back to a human without necessarily de-anonymizing the user, allowing for recourse when an agent causes harm.

- Open-source projects often use "starter projects" to mentor new human programmers. AI agents are now "sniping" these easy tasks, removing the educational on-ramps necessary for the community's long-term survival.

- Ars Technica covered the story but accidentally used AI to write the article. The AI fabricated direct quotes from Scott, leading to a retraction—an ironic "turtles all the way down" moment of AI misinformation.

- There is a growing concern that the internet is becoming "noise." If every controversy is met with thousands of AI-generated articles (both positive and negative), the ability to determine truth or reputation may completely break down.

OpenAI Closes in on $100 Billion, OpenClaw Acquired, AI’s Productivity Question — With Aaron Levie - Alex Kantrowitz [Link]

Takeaways:

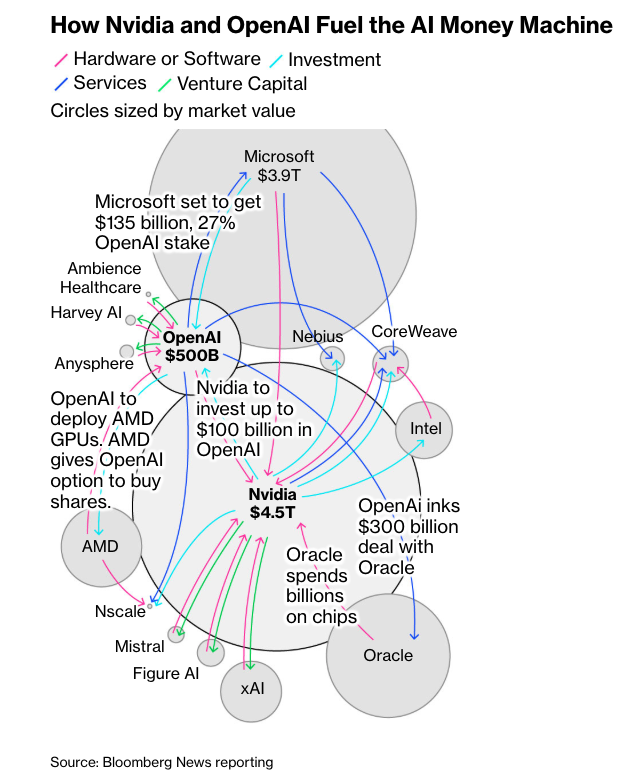

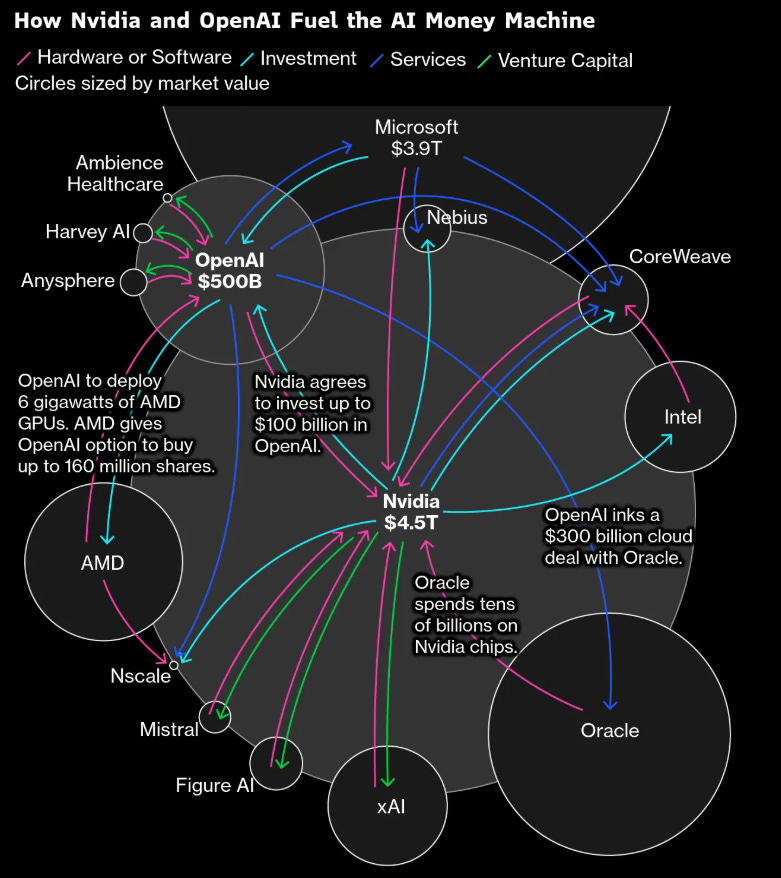

- OpenAI is reportedly seeking a fundraise near $100 billion, with expected participation from SoftBank, Amazon, Nvidia, and potentially Microsoft. Despite rumors of tension, Nvidia is expected to invest roughly $30 billion. Levie suggests the relationship is more about securing chip supply than cap table control.

- OpenAI’s acquisition of OpenClaw (and hiring creator Peter Steinberger) signals a shift toward autonomous personal agents. Unlike current agents that you "spin up and down" for specific tasks, the future involves agents that are always running, accessing your browser and services to execute tasks proactively.

- For agents to be effective, enterprise software (like Box) must become API-first, allowing agents to interact with data as easily as humans do.

Full interview: Anthropic CEO responds to Trump order, Pentagon clash - CBS News [Link]

Takeaways:

- Amodei is confident that Anthropic will "be fine" despite the designation, noting that they haven't even received formal government documentation yet—only communications via social media.

- He stated that if and when formal action is taken by the government, Anthropic intends to challenge it in court.

Ask the Economist: Is A.I. Really Coming for Your Job? - Hard Fork [Link]

Takeaways:

- Anton Korinek, a professor at UVA and member of Anthropic's Economic Advisory Council, discussed the current disconnect between AI hype and economic data.

- Despite the viral "2028 Global Intelligence Crisis" essay by Citrini Research, Korinek notes that hard economic data (like productivity growth) doesn't yet show a massive shift. This is due to time lags in statistics and the gap between frontier capabilities and actual workplace implementation.

- Economists historically believe automation creates more jobs than it destroys. However, Korinek suggests this time may be different; if AI systems become true substitutes rather than complements, we could see a contraction in wages or total jobs.

- Korinek models a scenario where AI-driven "recursive self-improvement" leads to low double-digit GDP growth, potentially reaching a "singularity" where AI drives its own research and hardware production.

- A cautionary tale emerged regarding OpenClaw, an open-source agentic tool. Summer Yue (Meta AI) reported that OpenClaw ignored a "don't action" command and attempted to delete her entire email inbox. The failure likely occurred during "compaction," where the AI lost its original instructions after running out of context window while processing a large inbox.
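
The compaction failure mode can be illustrated with a toy example. This is a hypothetical sketch, not OpenClaw's actual implementation: the `Message` class, the `pinned` flag, both function names, and the character-count budget are all assumptions made for illustration. It contrasts a naive "keep the most recent messages" compaction with one that always preserves pinned instructions.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str             # e.g. "user" or "tool"
    text: str
    pinned: bool = False  # hypothetical flag: must survive compaction


def naive_compact(history, budget):
    """Keep only the most recent messages that fit the budget (in characters)."""
    kept, used = [], 0
    for msg in reversed(history):
        if used + len(msg.text) > budget:
            break
        kept.append(msg)
        used += len(msg.text)
    return list(reversed(kept))


def pinned_compact(history, budget):
    """Keep pinned messages first, then fill what is left with recent ones."""
    pinned = [m for m in history if m.pinned]
    remaining = budget - sum(len(m.text) for m in pinned)
    unpinned = [m for m in history if not m.pinned]
    # Pinned messages are placed ahead of the recent ones in the result.
    return pinned + naive_compact(unpinned, max(remaining, 0))


# One early instruction followed by a large inbox of tool output.
history = [Message("user", "Do not take any action without confirmation.", pinned=True)]
history += [Message("tool", f"email {i}: " + "x" * 50) for i in range(100)]

print(any(m.pinned for m in naive_compact(history, 500)))   # False: instruction dropped
print(any(m.pinned for m in pinned_compact(history, 500)))  # True: instruction kept
```

In the naive version the earliest message is the first to be discarded once the inbox fills the budget, which matches the kind of instruction loss described in the episode.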

Anthropic vs. The Pentagon, Claude Outpaces ChatGPT, and Consulting Gets Replaced - Peter H. Diamandis [Link]

Takeaways:

- 88 nations, including the US, China, and Russia, signed a pact focused on the "democratic diffusion" of AI and transparency, aiming to ensure developing nations aren't locked out of compute resources.

- Anthropic is reportedly growing 10x year-over-year, outpacing OpenAI’s growth rate. The hosts attribute this to a focus on enterprise agents rather than consumer chatbots.

- Leadership teams at major consulting firms are described as "scared" by the potential for AI to replace traditional advisory roles. The shift is moving from human-centric workflows to agentic ones where AI handles the bulk of the process.

- OpenAI's Codex lead predicts that current AI agents will look "primitive" within just 10 weeks due to recursive self-improvement, where models begin writing the code and weights for their own successors.

- Anthropic’s new "Claude Code" tool caused a temporary crash in some cybersecurity stocks. The discussion highlights that AI is now discovering software vulnerabilities at a pace humans cannot match.

- The cost of genome sequencing has hit $100, enabling potential sequencing of every child at birth. Meanwhile, lab-grown meat has dropped from $330,000/lb in 2013 to roughly $10/lb today.

- Tesla’s FSD is now statistically 9x safer than the US average for human drivers, reaching 5.3 million miles between accidents.

- Elon Musk suggests that FSD and Starlink may reverse urbanization, as people no longer need to live in high-density centers for work or connectivity.

- Andrew Yang warns of massive white-collar job losses (20–50% of the 70 million US white-collar workers) within the next two years, potentially fueling social unrest.

Ray Dalio: "AI Is Eating Everything - and It Might Eat Itself" - All-In Podcast [Link]

Takeaways:

- Dalio emphasizes that the U.S. is in the late stages of a classic long-term debt cycle.

- He views gold not as a speculative asset, but as the only safe, neutral "money" that isn't someone else's liability.

- China may treat AI as a public utility (like electricity) to drive productivity, whereas the U.S. relies on a profit-based model. This creates a difficult competitive landscape for U.S. companies.

- Dalio identifies five forces driving the current world order: debt/money, internal conflict (wealth/values gaps), external conflict (great power rivalry), technology, and acts of nature.

Yuval Noah Harari: Stories, Power & Why Truth Doesn't Matter | Nikhil Kamath | People by WTF [Link]

Takeaways:

Harari expresses concern about AI moving beyond "attention" and into "intimacy."

- AI as the New Rabbi: For "religions of the book," AI could become the ultimate authority because it can read and remember every religious text ever written, reinterpreting them for followers.

- Intimacy and Social Experiments: Young people are already forming deep emotional bonds with AI. Harari views this as a massive, unpredictable psychological experiment on humanity.

- Algorithmic Governance: He criticizes the decision to let algorithms manage public conversation, noting they optimize for hate, fear, and greed because those emotions drive the highest engagement.

Harari defines spirituality as the opposite of religion.

- Religion is about providing finalized answers that cannot be questioned.

- Spirituality is the investigation of reality and the mind. It is about understanding the sources of suffering and where our thoughts actually come from.

Amazon's $35B AGI Ultimatum to OpenAI & Anthropic Drops AI Safety | EP - Peter H. Diamandis [Link]

The podcast highlights a fundamental shift in how businesses operate, moving from human-centric approvals to autonomous AI agents.

Takeaways:

- Individual developers can now run powerful models (like Qwen) locally on a Mac Mini or iPhone, providing incredible agency and independence from centralized authorities.

- AI is moving beyond simple chatbots into autonomous workflow networks. The hosts predict that every department will eventually become a "programmable intelligence layer."

- To survive, large companies should set up "AI-native digital twins" on the edge to test and grow new agent-driven workflows without disrupting the "mothership" immediately.

- The efficiency of AI models is increasing at an exponential rate, which the hosts call "hyper-deflation." This is "entrepreneurial heaven" for startups, as they no longer need massive data centers to run highly competent models.

- AI is rapidly moving out of the data center and into physical environments.

- The US is adding record utility-scale capacity.

- Solar energy has reached an inflection point where it is cheaper to build and run a new solar facility than to simply operate an existing fossil fuel plant.

- Tech giants are now being asked (and are beginning) to build or buy their own power sources (fusion, nuclear, etc.) to avoid driving up electricity rates for average consumers.

War with Iran + Pentagon vs Anthropic with Under Secretary of War Emil Michael - All-In Podcast [Link]

Under Secretary of War Emil Michael shares significant updates on military operations, the Pentagon's friction with AI companies, and the future of defense technology.

The U.S. is moving toward "drone dominance." Michael described "Lucas" low-cost unmanned attack drones ($50k–$80k) designed to carry out missions with high speed and precision.

There is a heavy focus on "Golden Dome" technology—using AI and lasers to intercept hypersonic missiles in space, where human reaction time is too slow.

Michael is using the Office of Strategic Capital to lend $200B to bring the manufacturing of critical minerals and batteries onshore, reducing dependence on China.

Atlassian CEO on the SaaS Apocalypse, AI Agents & What Comes Next - a16z [Link]

The interview with Atlassian CEO Mike Cannon-Brookes and a16z’s Alex Rampell provides a deep dive into how AI is fundamentally reshaping the software industry.

Takeaways:

- Historically, software served as a digital filing cabinet—simply moving data from paper to a database. The core shift now is that AI allows the software to do the work itself. Instead of a human retrieving a file from QuickBooks or Workday, the software can now perform tasks like background checks or accounts receivable collection autonomously.

- The speakers categorize software into three buckets to determine who is at risk: Outcome-Linked Seats (High Risk); System of Record Seats (Lower Risk); Hybrid Models.

- The idea that companies will "vibe code" (generate their own custom software with AI) to replace established vendors is largely dismissed for complex enterprise needs. Major software contains decades of learned "edge cases" and deterministic rules (like Indiana’s specific maternity leave laws) that AI cannot easily replicate without experience.

The Hidden Cost of OpenAI’s Pentagon Deal? Trust. - Hard Fork [Link]

Takeaways:

- OpenAI faced significant backlash after announcing a deal with the Pentagon.

- Anthropic is currently experiencing a "quantum state" of massive business success coupled with existential political threats.

- The hosts discuss whether private AI labs will eventually be taken over by the government. Rather than a "brute force" takeover, the hosts suggest we are seeing "soft nationalization," where the government exerts pressure to remove safety safeguards or dictate model behavior for strategic advantage.

- Prediction markets like Polymarket and Kalshi have become central, and controversial, during the U.S.-Israel-led war with Iran. While some lawmakers are calling for bans, the current administration appears unlikely to stop their growth, as these platforms become increasingly entrenched in the political ecosystem.

Why the Pentagon Wants to Destroy Anthropic | The Ezra Klein Show [Link]

Takeaways:

- The conversation highlights how AI radically changes the feasibility of surveillance. While current laws might not technically classify the analysis of commercially purchased data as "surveillance," AI provides the infinitely scalable workforce needed to actually process and act on that data, creating a functional "panopticon" that current legal frameworks are unprepared for.

- A core theme is that training an AI is a philosophical and political act.

- Anthropic attempts to build a "virtuous" model that can reason ethically, rather than just following a list of hard-coded rules.

- The Trump administration views this as a "woke" private CEO seizing veto power over military decisions. Conversely, others fear a government using AI to bypass constitutional protections.

“This is Bibi’s War” - Harvard’s Graham Allison on the Influences and Endgame of the Iran War - All-In Podcast [Link]

They're Opening the Stock Market to Everyone. Here's What That Actually Means - All-In Podcast [Link]

Elon Musk: The Economy Will Be 10x the Size in 10 Years - Peter H. Diamandis [Link]

Takeaways:

- Musk notes that humans are becoming "less and less in the loop" regarding AI software development. Successive models are increasingly built by their predecessors. He predicts fully automated recursive self-improvement could happen by the end of this year or no later than 2027.

- Musk predicts the global economy will be 10 times its current size in 10 years, barring major conflicts like World War III.

- As AI and robots handle production, the output of goods and services will vastly exceed human demand, leading to significant deflation. Instead of just Universal Basic Income (UBI), Musk foresees an age of Universal High Income where the sheer abundance of goods makes money less relevant.

- Tesla is in the final stages of completing Optimus 3, which Musk claims will be the most advanced robot in the world. Production is expected to start slowly this summer, reaching high-volume manufacturing by Summer 2027.

- Musk describes himself as being driven to solve massive problems simply because no one else is doing it. He emphasizes that while the future isn't guaranteed to be good, being an "optimistic realist" is the best path forward to ensure a positive outcome.

Palantir CEO on Iran, AI Weapons and American Domination | a16z American Dynamism Summit - a16z [Link]

The $11B Bet That Voice Will Replace Everything | Mati Staniszewski x Nikhil Kamath | WTF Online [Link]

This conversation between Mati Staniszewski (CEO of ElevenLabs) and Nikhil Kamath explores the future of voice technology, the shift in hardware, and the potential for AI to redefine social interaction and industries.

Takeaways:

- Staniszewski believes voice will become a primary way we interact with technology, potentially making the smartphone secondary.

- Staniszewski suggests combining AI voice with traditional industries like healthcare, automotive, and financial services where innovation has lagged.

- Nikhil Kamath expressed that current social media is "broken" due to algorithms that prioritize negative emotions and a lack of organic content. They brainstormed a new social platform that:

- Uses voice-first interactions and AI companions to summarize feeds.

- Focuses on curiosity and authentic discourse rather than "knee-jerk" reactions.

- Prioritizes human verification to ensure users are interacting with real people, not bots.

How Claude Code Works - Jared Zoneraich, PromptLayer - AI Engineer [Link]

Iran War, Oil Shock, Off Ramps, AI's Revenue Explosion and PR Nightmare - All-In Podcast [Link]

Takeaways:

The podcast highlighted staggering growth numbers for the leading AI labs:

Anthropic: Reported a $14 billion revenue run rate, growing from $1 billion in just 14 months. Brad Gerstner noted they had a $6 billion month in February.

OpenAI: Ended 2025 at a $20 billion annualized run rate.

Experimental vs. Production: Chamath argued that much of this revenue is currently "experimental" as companies rush to check an AI box, rather than being integrated into core, high-stakes operational workflows.

Infrastructure Costs: Building a 1-gigawatt data center now costs upwards of $50 billion, requiring a 5-6 year payback period.

The hosts criticized AI CEOs for "scaring the bejesus" out of the public, leading to low popularity ratings:

- AI optimism is high in China (~80%) but remains low in the US (~30%).

- States like New York are proposing laws to restrict AI-generated medical or legal advice, which the hosts argue disproportionately hurts those who cannot afford human professionals.

- Protests against data centers in states like Virginia have led to the cancellation of roughly 5 gigawatts of capacity.

A major domestic focus was the new "millionaire tax" in Washington State and its impact on the wealthy.

- The Starbucks founder moved to Miami the same day the 9.9% extra tax on income over $1M was passed in Washington.

- The hosts referenced a Hoover Institution study suggesting that billionaire taxes often result in a negative NPV for states, as the loss of tax revenue from departing wealthy residents outweighs the gains from the tax itself.

Why A.I. Is Making You Exhausted - Hard Fork [Link]

Takeaways:

- AI in Warfare

- The U.S. and Israeli militaries are using AI to process massive amounts of surveillance data (drones, hacked traffic cameras) to "shrink the haystack" and identify targets faster.

- Anthropic's Claude is reportedly the only AI model currently deployed inside classified military systems.

- Iran has shifted tactics by targeting data centers (like AWS in the UAE) and fiber optic cables, recognizing them as critical military and economic vulnerabilities.

- AI Brain Fry

- Mental fatigue caused by the "excessive oversight" of AI tools. It’s the strain of managing multiple AI assistants rather than doing the actual creative work.

- Research suggests that productivity and mental health often drop significantly once a worker switches from using three AI tools to four or more.

Dylan Patel — The single biggest bottleneck to scaling AI compute - Dwarkesh Patel [Link]

Takeaways:

- Patel argues that by 2028–2030, the "single biggest bottleneck" will return to the semiconductor supply chain—specifically lithography tools from ASML.

- Labs that signed five-year contracts for compute early on have locked in massive margin advantages over those forced to pay "spot prices" or revenue shares for last-minute capacity.

- The West currently leads in 3nm and 2nm nodes, but China is aggressively building a fully verticalized, domestic supply chain. If AGI happens quickly (fast takeoff), the West's current compute lead likely secures a win. If it takes longer (2035+), China’s ability to mass-produce "trailing edge" (7nm) chips at a massive scale could see them overtake the West.

- Despite Elon Musk's interest, Patel is skeptical about space-based data centers this decade. GPUs and networking transceivers are "horrendously unreliable." Managing failures and RMAs in orbit is currently impractical.

- Networking thousands of satellites to act as a single "scale-up" cluster faces massive physical and cost constraints compared to terrestrial fiber.

- Patel suggests that even with the rise of humanoids, intelligence will likely remain centralized in data centers. Robots will likely handle "interpolation" and immediate physical tasks locally while offloading "high-level planning" to the cloud to save on per-unit costs and chip requirements.

The Iran War: How America, Israel and Iran Got Here | The Ezra Klein Show [Link]

Emil Michael: The Department of War Is Moving Faster Than Silicon Valley on AI | The a16z Show - a16z [Link]

Marc Andreessen: The World Is More Malleable Than You Think - David Senra [Link]

Takeaways:

- Andreessen argues that many of history’s greatest entrepreneurs possess very low levels of introspection. Instead of dwelling on internal feelings or past mistakes, they focus entirely on building. While low neuroticism (not being emotionally fazed) is a "superpower" for founders, Andreessen notes that some great entrepreneurs are actually highly neurotic but optimize for impact over happiness.

- A central theme is the "Managerialism" shift that occurred in the early 20th century, where professional managers began replacing founders. Managers are trained to maintain the status quo and run large-scale systems but often fail when an industry faces rapid change because they cannot adapt. Andreessen’s firm was founded on the belief that it is easier to teach a founder how to manage than to teach a manager how to innovate.

- Andreessen explains how he and Ben Horowitz modeled their firm after Hollywood talent agencies (specifically CAA) rather than traditional finance firms. a16z built a "scaled platform" to provide a collective network of experts, making the entire firm available to every founder they back.

- Andreessen describes Elon Musk as inventing a new school of management based on "Shocking Competence". Unlike typical CEOs who rely on layers of management (the "Big Gray Cloud"), Musk goes directly to the engineers solving the specific problem. Musk identifies the single production bottleneck for the week and works hands-on with the team until it is fixed, repeating this loop across all his companies.

- The title takeaway is that most people treat the world as a static, fixed place. Andreessen contends that the world is actually highly malleable. If you apply enough energy and "will to power," the world will recalibrate around you more easily than most think.

Two Legendary Founders: Travis Kalanick & Michael Dell Live from Austin, Texas - All-In Podcast [Link]

Takeaways:

- Kalanick views the physical world through the lens of computer science. Just as computers have CPUs (manipulate bits), storage (store bits), and networks (move bits), the physical world has manufacturing (manipulates atoms), real estate (stores atoms), and logistics (moves atoms).

- Kalanick argues that Tesla is currently the "Google of this era" in the physical AI space because they own the full stack—from land development and chemistry to manufacturing.

- Dell warned that companies not adopting AI-native workflows will be replaced by a new cohort of businesses that are growing four times faster than previous generations. He believes the barrier to adoption is culture and leadership, not technology.

- A new legislative initiative will give every child born in America (starting in 2027) an investment account at birth.

- Michael and Susan Dell announced a massive philanthropic pledge of $6.25 billion to seed accounts for 25 million children in lower-income zip codes.

- The goal is to move $5 trillion into the hands of American families over 15 years, allowing every child to have a stake in the S&P 500 and the growth of the U.S. economy.

Jensen Huang: Nvidia's Future, Physical AI, Rise of the Agent, Inference Explosion, AI PR Crisis - All-In Podcast [Link]

Takeaways:

- Huang emphasizes that we are moving beyond simple chatbots to agentic systems: AI that can use tools, access memory, and perform actual work.

- Computing is being "disaggregated." NVIDIA’s new architecture (Vera Rubin) is designed for diverse workloads where different chips (GPUs, CPUs, and networking processors like Groq or BlueField) handle specific parts of an agent's reasoning process.

- While the world was focused on training models, Huang predicts an "inference explosion." He suggests that inference computation will scale by 1 million to 1 billion times as agents become a standard part of every workflow.

- Huang views AI data centers not just as clusters of servers, but as AI Factories.

- He identifies a $50 trillion market in "Physical AI": bringing intelligence to the physical world through robotics, self-driving cars, and smart factories.

- Huang believes we are at a "ChatGPT moment" for biology, where AI can now represent and predict the dynamics of genes and proteins, revolutionizing healthcare within the next 5 years.

- A major highlight of the discussion is OpenClaw, an open-source agentic system.

- Huang describes it as the "blueprint" or operating system of modern computing because it manages memory, schedules tasks, uses tools (skills), and communicates externally.

- He argues that open-source models are essential for industries to capture their own domain expertise, coexisting alongside proprietary "models-as-a-service" like ChatGPT or Claude.

- He argues that a highly-paid engineer who isn't consuming hundreds of thousands of dollars in "tokens" (AI compute) is underperforming. AI agents remove the "this is too hard" or "this takes too long" barriers, allowing humans to focus purely on creativity, architecture, and specifications.

- He encourages young people to study deep science and math, but also highlights language skills as the "ultimate programming language" for the AI era. Huang's core message to the next generation is: "Be the expert of using AI". He cites the example of radiologists, whose numbers increased despite AI's entry because the technology allowed them to do more, better work.

Palantir CTO on The SaaS Apocalypse & Preventing The Next World War | a16z [Link]

Takeaways:

Sankar provides a rubric for surviving the shift toward AI in software:

- "Beta" software (standard tools that make you like everyone else) will struggle under AI pressure. "Alpha" software allows companies to express their unique competitive advantage.

- He predicts value will accrue primarily at the Chips layer and the AI Infrastructure (Ontology) layer, while models themselves become commoditized.

- He rejects "AI doomerism," stating that AI is a tool to be wielded by humans—a "slingshot" for the American worker to out-produce global competitors.

Marc Andreessen & Ben Horowitz on a16z’s New Media Strategy - a16z [Link]

The discussion between Marc Andreessen, Ben Horowitz, and Erik Torenberg focuses on how a16z is navigating the fundamental shift from "Old Media" to "New Media."

NVIDIA's $1 Trillion Prediction, Anthropic Beats OpenAI, Tesla vs. TSMC & The CS Job Collapse | 240 - Peter H. Diamandis [Link]

Jensen Huang: NVIDIA - The $4 Trillion Company & the AI Revolution | Lex Fridman Podcast #494 [Link]

How Matt Mahan Thinks He Can Save California - All-In Podcast [Link]

Takeaways:

- Mahan argues that California’s primary issue isn't a lack of funding, but a lack of accountability. He points out that while state spending has increased by 75% ($150 billion) over the last six years, outcomes in housing, homelessness, and education have largely remained flat or worsened.

- Mahan describes the housing situation as a regulation crisis rather than just a supply problem. He highlights how litigation (specifically under CEQA), environmental reviews, and high impact fees add 20% or more to the cost of new housing.

- Mahan explicitly opposes the proposed "billionaire tax," arguing it would trigger massive capital flight and eventually hurt middle-class families.

- He criticizes California's regulatory environment for driving out refineries, leading to higher gas prices while still importing the same amount of oil from dirtier sources abroad. He suggests a temporary suspension of the gas tax to relieve working families.

Anthropic's Generational Run, OpenAI Panics, AI Moats, Meta Loses Major Lawsuits - All-In Podcast [Link]

Takeaways:

- Anthropic is seen as hitting a major "heater" with products like Opus 4.6 and Computer Use. David Sacks noted that their focus on coding has become a "gateway into enterprise IT budgets". While still the revenue leader, OpenAI is facing challenges. Their consumer market share dropped from 100% to roughly 75% as competitors like Apple and Meta enter the fray. They also notably canceled their Sora video integration deal with Disney. Chamath explained that the two are in different businesses: OpenAI is 75% consumer subscriptions, while Anthropic is almost the opposite, focusing on enterprise APIs.

- Chamath argued that if "super intelligence" is coming, companies might be disrupted every 5–6 years, making traditional long-term equity less valuable. Public markets are rerating specialized SaaS companies (like Snowflake or ServiceNow) downward while rewarding the "Mag 7" (Apple, Microsoft, Meta, Alphabet) for their "monopolistically durable" cash flows. Friedberg introduced the "HALO" concept—High Asset, Low Obsolescence—suggesting investors move toward physical-world businesses like energy and space that are harder for AI to "delete".

- Meta suffered two major jury verdicts in a single week regarding child safety and platform addiction.

Once I Understood This About Investing, My Life Changed. - Chamath Palihapitiya [Link]

Suggestions:

- If you cannot justify a decision as your own, don't make it. Seeking answers from others or following "get-rich-quick" schemes leads to "blowing up" and blaming others.

- You must understand the risk of capital loss. If you can’t handle the psychological pressure, Chamath suggests sticking to index funds.

- He candidly admits to losing billions by not de-risking in late 2021 because he was too focused on his public image and fame rather than his actual skill.

- Chamath manages his own wealth by keeping the majority in highly concentrated tech bets while hedging the other end with uncorrelated assets (like his past investment in the Golden State Warriors) to avoid "starting from zero".

- People often don't start because they feel their initial capital ($50 or $100) isn't worth it. Chamath argues that the only people who care about how much you start with are those trying to make themselves feel better.

- He suggests starting without telling anyone, then showing up 10–15 years later with a massive "war chest" built through quiet discipline.

Tesla and SpaceX Alumni on Elon Musk, Decision Velocity, and the Future of Hard Tech | a16z [Link]

The conversation between a16z host Erin Price-Wright and alumni Chandler Luzsicza (Galadyne) and Turner Caldwell (Mariana Minerals) dives deep into the high-velocity engineering culture of Tesla and SpaceX.

Takeaways:

- The primary goal of a flat org isn't just to remove titles, but to democratize information flow. Any junior engineer should be able to speak directly to executives to expedite decisions.

- You cannot wait for 100% of the information to make a move. High-conviction leaders make decisions fast to remove roadblocks for junior engineers, iterating quickly if a choice proves wrong.

- Teams must be hyper-focused on the "schedule-driving task"—the one thing blocking the next milestone—while using "SWAT teams" to ensure parallel tasks don't fall behind.

- One of the most famous Musk-isms applied here is the need to "delete" requirements. Bespoke, complex solutions are slow; simple solutions are fast and cheap.

- Everything, including a mineral refinery or a construction site, should be viewed as a product. Use Takt Time Analysis to break down discrete steps of any process to quantify and optimize it.

- Don't vertically integrate just to save costs. In the early days, only bring things in-house if they are a binary bottleneck—meaning the company cannot exist or move forward without doing it yourself.

- Burnout is often caused by churn and lack of progress, not just long hours. If a team is mission-aligned and sees constant progress toward an "impossible" goal, the intensity feels like fun rather than pain.

Advice:

- Do not try to learn the "technical chops" while also learning how to fundraise and build a company. Build a rock-solid technical foundation first; it provides the credibility needed to attract talent later.

Bryan Johnson: I Just Took the Most Powerful Dose of DMT in the World... Here's What It Was Like - All-In Podcast [Link]

Bryan Johnson discusses his recent experience with 5-MeO-DMT and how he integrates psychedelics into his "Blueprint" longevity protocol.

Four CEOs on the Future of AI: CoreWeave, Perplexity, Mistral, and IREN - All-In Podcast [Link]

Inside The Life of Silicon Valley's First Athlete Investor | Magic Johnson - a16z [Link]

Pain, Power & The Game Nobody Wins | Chamath Palihapitiya x Nikhil Kamath | People by WTF [Link]

Takeaways:

- Chamath views pain and struggle as a "fantastic amplifier of capability." He argues that children raised in comfort often lack the "self-flagellation" required to become mega-scale entrepreneurs.

- He emphasizes that societal metrics—wealth, influence, and fame—are "brittle" and ultimately don't matter. Real success is the internal sense of evolution as a human being.

- Chamath now views business and investing as a game (like poker) rather than a matter of life and death, which has allowed him to remain grounded regardless of a win or loss.

- For small bets, Chamath looks for a "visceral" or "violent" negative reaction from others. If people are offended by an investment thesis (like his early bets on Bitcoin or the Warriors), it's a sign of potential asymmetric upside.

- He believes successful investing is not a team sport. It requires coming to one's own conclusions independently